3D SAV Final Summary

by Yang Liu on Mar.25, 2011, under Uncategorized

In this class, I mainly focused on the basic techniques covered in each assignment. For the final project, I integrated these techniques into one of my video games made in Processing.

Snowman scan

For the first assignment, I worked in a group with Ivana, Ezter, Nik and Diana. The final result was a scan of a snowman, but along the way we experimented with different techniques such as structured light. We spent a lot of time setting up the environment and tweaking the parameters of the projector and camera, but we could not get a satisfactory result, so we went with the snowman approach. For the code, we exported the blob data from the video as an XML file, and in openFrameworks we redrew the vertices according to that XML file.

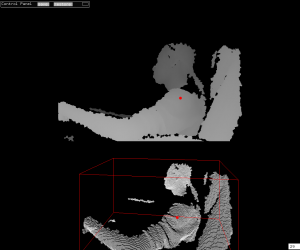
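The original pipeline was written in openFrameworks, and the post does not show the XML layout. As a rough Java sketch of the reading side only, assuming a hypothetical schema in which each frame's blob is a `<blob>` element holding `<pt x=".." y=".." z=".."/>` children, the vertices could be loaded with the JDK's built-in DOM parser:

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

class BlobXmlReader {
    // One vertex read back from the exported file.
    static final class Vertex {
        final float x, y, z;

        Vertex(float x, float y, float z) {
            this.x = x;
            this.y = y;
            this.z = z;
        }
    }

    // Parses every <pt x=".." y=".." z=".."/> element (hypothetical schema) into a flat vertex list.
    static List<Vertex> parse(String xml) {
        try {
            Document doc = DocumentBuilderFactory.newInstance()
                    .newDocumentBuilder()
                    .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
            NodeList pts = doc.getElementsByTagName("pt");
            List<Vertex> vertices = new ArrayList<>();
            for (int i = 0; i < pts.getLength(); i++) {
                Element e = (Element) pts.item(i);
                vertices.add(new Vertex(
                        Float.parseFloat(e.getAttribute("x")),
                        Float.parseFloat(e.getAttribute("y")),
                        Float.parseFloat(e.getAttribute("z"))));
            }
            return vertices;
        } catch (Exception e) {
            // Malformed XML or a missing/non-numeric attribute ends up here.
            throw new IllegalArgumentException("could not read blob XML", e);
        }
    }
}
```

Each returned `Vertex` would then be drawn as a point or mesh vertex; the schema and class names are illustrative, not the ones used in the project.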

Bounding Box in 3D

In this assignment, I implemented a bounding box in 3D space. The algorithm is not much different from the 2D version, but it is fun to work with. In the 2D world, the bounding box alone did not let me "feel" the space; it just followed wherever I went. In 3D space, however, I can "push" the bounding box, extend it, and get real-time feedback. This really helped me feel the 3D space.
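Extending the 2D bounding box to 3D only adds a third axis to the min/max bookkeeping. A minimal Java sketch, assuming the input is an array of `{x, y, z}` points already filtered to the player (the class name `BoundingBox3D` is hypothetical):

```java
class BoundingBox3D {
    // Start "inside out" so the first added point sets both min and max.
    float minX = Float.POSITIVE_INFINITY, minY = Float.POSITIVE_INFINITY, minZ = Float.POSITIVE_INFINITY;
    float maxX = Float.NEGATIVE_INFINITY, maxY = Float.NEGATIVE_INFINITY, maxZ = Float.NEGATIVE_INFINITY;

    // Grows the box so that it contains the point (x, y, z).
    void add(float x, float y, float z) {
        minX = Math.min(minX, x);
        maxX = Math.max(maxX, x);
        minY = Math.min(minY, y);
        maxY = Math.max(maxY, y);
        minZ = Math.min(minZ, z);
        maxZ = Math.max(maxZ, z);
    }

    // True until at least one point has been added.
    boolean isEmpty() {
        return minX > maxX;
    }

    float width()  { return maxX - minX; }
    float height() { return maxY - minY; }
    float depth()  { return maxZ - minZ; }

    // Builds a box around every {x, y, z} point in the array.
    static BoundingBox3D of(float[][] points) {
        BoundingBox3D box = new BoundingBox3D();
        for (float[] p : points) {
            box.add(p[0], p[1], p[2]);
        }
        return box;
    }
}
```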

For the superman gesture, the program simply checks the position of the centroid relative to the bounding box. When the centroid is in the bottom third of the bounding box, it counts as a superman gesture.
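The superman test reduces to one comparison on the vertical axis. In this hypothetical helper, y is assumed to grow upward, so the bottom of the box is `minY`; with screen-style coordinates where y grows downward, the comparison would flip.

```java
class SupermanGesture {
    // Average y of the player's points (the same points used to build the bounding box).
    static float centroidY(float[][] points) {
        if (points.length == 0) throw new IllegalArgumentException("no points");
        float sum = 0;
        for (float[] p : points) {
            sum += p[1];
        }
        return sum / points.length;
    }

    // True when the centroid sits in the bottom third of the box.
    // Assumes y grows upward, so the bottom edge is boxMinY.
    static boolean isSuperman(float centroidY, float boxMinY, float boxMaxY) {
        float height = boxMaxY - boxMinY;
        if (height <= 0) return false; // degenerate box, nothing to judge
        float relative = (centroidY - boxMinY) / height; // 0 = bottom edge, 1 = top edge
        return relative < 1f / 3f;
    }
}
```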

The following is a screenshot of the program.

Kinect with interactions

In this assignment, I implemented a very simple interaction in 3D space: a floating cube that can sense objects inserted into it. The program constantly counts how many points lie inside the cube. Once the number of points exceeds a threshold, it knows something is being inserted and turns red. It is very simple, but it is also an easy and reliable way to do basic 3D interaction. With this technique, I could make a game like the official Kinect game in which players try to block balls flying toward them: by making the floating cube move and using the same detection technique, I could get a similar game.
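The floating-cube interaction can be sketched as an axis-aligned cube that counts how many points from the depth cloud fall inside it. The class name, the centre/half-size representation and the threshold value are assumptions for illustration:

```java
class HitCube {
    final float cx, cy, cz; // cube centre
    final float half;       // half of the edge length
    final int threshold;    // trigger once MORE than this many points are inside

    HitCube(float cx, float cy, float cz, float half, int threshold) {
        this.cx = cx;
        this.cy = cy;
        this.cz = cz;
        this.half = half;
        this.threshold = threshold;
    }

    // A point {x, y, z} is inside if it is within half an edge of the centre on every axis.
    boolean contains(float[] p) {
        return Math.abs(p[0] - cx) <= half
                && Math.abs(p[1] - cy) <= half
                && Math.abs(p[2] - cz) <= half;
    }

    int countInside(float[][] cloud) {
        int n = 0;
        for (float[] p : cloud) {
            if (contains(p)) n++;
        }
        return n;
    }

    // True when something is being inserted (e.g. the cube should turn red).
    boolean isTriggered(float[][] cloud) {
        return countInside(cloud) > threshold;
    }
}
```

To turn this into the ball-blocking game, the cube's centre would be updated every frame before calling `isTriggered`.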

Kinect Blob

In this assignment, I focused on the visual representation of the 3D data from the Kinect. I tried different shaders on the mesh and found that applying a black shader gave a 3D blob-like effect. Although there is no real blob detection involved, it still looks interesting.

Birds vs. Zombies with Kinect

For the final, I worked on one of my Processing games, Birds vs. Zombies. It is a mashup of Angry Birds and Plants vs. Zombies that uses the Kinect as the controller. It is also an online game, so players with a Kinect connected to their computer can play with each other.

The Game

The game uses a traditional server-client structure in which both sides keep listening to each other. The client passes the Kinect data to the server. The server runs the physics simulation according to these data, takes a snapshot of the current state every frame, and sends it back to the clients. The clients then redraw the scene according to that data. I used Box2D as the physics simulation library and KryoNet as the networking library. Since Box2D is not meant to be used online, I had to change some of the Box2D source code to make it work.
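The post does not show what a per-frame snapshot contains. As an assumption-laden sketch, suppose it is just each Box2D body's id, position and angle; the server would fill one after every physics step and the client would decode it to redraw. KryoNet handles serialization itself in the real project, so the hand-rolled byte format below (using only `java.io`) stands in for it purely to show the data flow:

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;

class WorldSnapshot {
    // Minimal per-body state a client needs to redraw one sprite (hypothetical fields).
    static final class BodyState {
        final int id;
        final float x, y, angle;

        BodyState(int id, float x, float y, float angle) {
            this.id = id;
            this.x = x;
            this.y = y;
            this.angle = angle;
        }
    }

    final int frame;
    final List<BodyState> bodies;

    WorldSnapshot(int frame, List<BodyState> bodies) {
        this.frame = frame;
        this.bodies = bodies;
    }

    // Server side: encode the snapshot after each physics step.
    byte[] toBytes() {
        ByteArrayOutputStream buffer = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(buffer)) {
            out.writeInt(frame);
            out.writeInt(bodies.size());
            for (BodyState b : bodies) {
                out.writeInt(b.id);
                out.writeFloat(b.x);
                out.writeFloat(b.y);
                out.writeFloat(b.angle);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return buffer.toByteArray();
    }

    // Client side: decode a snapshot and hand it to the renderer.
    static WorldSnapshot fromBytes(byte[] data) {
        try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(data))) {
            int frame = in.readInt();
            int count = in.readInt();
            List<BodyState> bodies = new ArrayList<>(count);
            for (int i = 0; i < count; i++) {
                // Arguments are evaluated left to right, matching the write order.
                bodies.add(new BodyState(in.readInt(), in.readFloat(), in.readFloat(), in.readFloat()));
            }
            return new WorldSnapshot(frame, bodies);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```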

The Kinect

The Kinect interaction happens only on the clients. The server doesn't care what the control mechanism is.

At first, I used the bounding box to detect the hands. It worked but was not stable: because the depth data from the Kinect is very noisy, the bounding box sometimes suddenly became larger, and that jumping broke the detection.

Then I tried the standard deviation approach. I calculated the average distance between the centroid and every pixel of the hand, and used that distance as the radius of the current gesture. When it is larger than a threshold X, the hand is a palm; when it is smaller than X, it is a fist. Because it averages over every pixel, the size is stable enough to control the game.
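The palm/fist test averages the distance from the hand's centroid to each of its pixels. A minimal sketch, assuming the hand has already been segmented into an array of `{x, y}` pixel coordinates and that `thresholdX` is the tuned X from the text (class and method names are hypothetical):

```java
class HandPose {
    enum Pose { PALM, FIST }

    // Mean Euclidean distance from the centroid to every hand pixel; each pixel is {x, y}.
    static double meanRadius(int[][] pixels) {
        if (pixels.length == 0) throw new IllegalArgumentException("empty hand mask");
        double cx = 0, cy = 0;
        for (int[] p : pixels) {
            cx += p[0];
            cy += p[1];
        }
        cx /= pixels.length;
        cy /= pixels.length;
        double sum = 0;
        for (int[] p : pixels) {
            sum += Math.hypot(p[0] - cx, p[1] - cy);
        }
        return sum / pixels.length;
    }

    // An open hand spreads its pixels further from the centroid than a closed fist.
    // A radius exactly equal to the threshold is treated as a fist here.
    static Pose classify(int[][] pixels, double thresholdX) {
        return meanRadius(pixels) > thresholdX ? Pose.PALM : Pose.FIST;
    }
}
```

Because every pixel contributes to the mean, a single noisy pixel at distance D from the centroid shifts the radius by only about D divided by the pixel count, whereas it can move a bounding-box edge by its full distance.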

I also implemented hand detection in OpenCV using the Kinect's RGB camera, but it slowed the game down dramatically. There may be a way to optimise it, but for now I took it out.

The Kinect is better than pure OpenCV hand detection because it works in 3D space, so I can pick out the hand using depth information. Players don't need to wear gloves or play in front of a solid-colour background. This way, anyone can pick up the game and play it at home.
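Selecting the hand by depth can be as simple as keeping the pixels within a small band behind the closest valid reading, assuming the player holds the hand out in front of the body. The band width and the convention that a depth of 0 marks a missing reading are assumptions for this sketch, and `DepthHandMask` is a hypothetical name:

```java
class DepthHandMask {
    // Marks every pixel whose depth lies within `band` millimetres behind the closest valid pixel.
    // Assumes depthMm holds one depth value per pixel and 0 means "no reading".
    static boolean[] nearestBand(int[] depthMm, int band) {
        int nearest = Integer.MAX_VALUE;
        for (int d : depthMm) {
            if (d > 0 && d < nearest) nearest = d;
        }
        boolean[] mask = new boolean[depthMm.length];
        if (nearest == Integer.MAX_VALUE) return mask; // no valid depth at all
        for (int i = 0; i < depthMm.length; i++) {
            int d = depthMm[i];
            mask[i] = d > 0 && d <= nearest + band;
        }
        return mask;
    }
}
```

The resulting mask is what the palm/fist measurement would run on; no gloves or coloured background are involved because only depth is used.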

All the code can be found here.
